From specs that rival Nvidia’s $30,000 H100, to who’s making it, and the big twist nobody saw coming — here’s everything you need to know about Tesla’s most powerful chip ever.

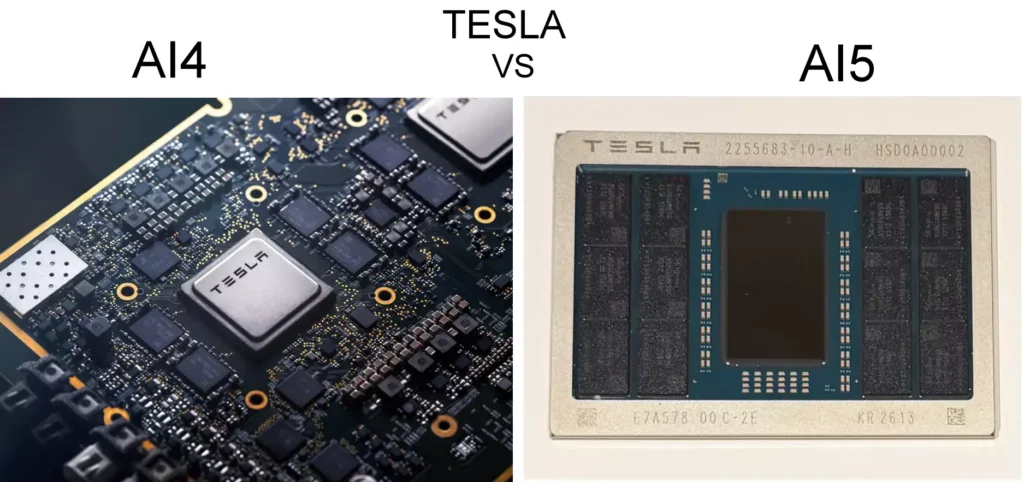

Okay, I have to admit — when Elon Musk woke up at 3 AM on April 15, 2026, and fired off a congratulatory post on X, I was genuinely excited. “Congrats to the @Tesla_AI chip design team on taping out AI5!” the post read, alongside a photo of a sleek chip module that looked more like a piece of jewelry than the future of autonomous driving.

And honestly? This is a big deal. Not just for Tesla, but for anyone who cares about where AI hardware is headed, whether you own a Tesla or are just fascinated by the chip wars happening right now between Silicon Valley giants. If you’ve been curious about the Tesla AI5 chip (what it is, what it does, who makes it, and how it compares to the old hardware), you’ve come to the right place. Let me break it all down.


First, What Does “Taping Out” Actually Mean?

If you’re not deep in the semiconductor world, the phrase “taped out” might sound like Tesla wrapped something in duct tape and called it a day. In reality, it’s one of the most significant milestones in chip development.

Tapeout is the semiconductor industry’s term for the moment a chip’s design is finalized and sent to the manufacturing foundry. Think of it as pressing “submit” on the most complex engineering assignment in the world. After tapeout, the chip moves into physical fabrication, testing, validation, and eventually mass production. It’s not the finish line — but it’s the last major design checkpoint before silicon becomes real.

For Tesla’s AI5, this is huge. The chip has been in development for years, through multiple delays and shifting timelines. The fact that it’s now heading to the fab is genuinely meaningful progress.

Elon Musk (@elonmusk) on X, April 15, 2026: “Congrats to the @Tesla_AI chip design team on taping out AI5! AI6, Dojo3 & other exciting chips in work.”

What Is the Tesla AI5 Chip?

The Tesla AI5 — also referred to as Hardware 5 or HW5 — is Tesla’s next-generation custom system-on-chip (SoC), designed primarily for real-time AI inference. It’s the brain that Tesla wants to power its autonomous systems: Full Self-Driving (FSD), the Optimus humanoid robot, and large-scale AI training supercomputers.

What makes this chip different from the chips you’d find in your phone or laptop is that it’s built from the ground up for Tesla’s specific workloads. It’s optimized for edge inference — making smart decisions in real time, on the device, without needing to send data to the cloud. That’s critical for an autonomous car that needs to react to a pedestrian in milliseconds.
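
To put “milliseconds” in perspective, here is a small back-of-the-envelope sketch of how far a car travels while a decision is still pending. The speed and both latency values are illustrative assumptions of mine, not Tesla figures.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at constant speed while a decision is pending."""
    speed_m_per_s = speed_kmh * 1000 / 3600
    return speed_m_per_s * (latency_ms / 1000)


# Hypothetical latencies: on-device inference vs. a cloud round trip.
scenarios = [
    ("on-device (assumed 20 ms)", 20),
    ("cloud round trip (assumed 200 ms)", 200),
]

for label, latency_ms in scenarios:
    metres = distance_during_latency(speed_kmh=50, latency_ms=latency_ms)
    print(f"{label}: {metres:.2f} m travelled at 50 km/h")
```

Under these assumed numbers, the on-device case costs about a quarter of a metre of travel and the cloud case nearly three metres, which is the gap edge inference is meant to close.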

“AI5 will punch far above its weight because the entire Tesla AI software stack is designed to make maximally effective use of every circuit. We co-designed our AI software and hardware.”— Elon Musk, March 2026

The chip drops what Musk calls “legacy hardware blocks” in favor of radical simplicity — heavy use of INT4, INT2, FP8, and mixed-precision tensor accelerators that are perfectly matched to the types of neural networks Tesla runs. It’s not a general-purpose chip trying to do everything. It’s a laser-focused tool built for one ecosystem.
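
If formats like INT4 are unfamiliar, the sketch below shows the general idea of low-precision arithmetic: squeeze floating-point weights into tiny integers and accept a small rounding error. This is generic symmetric quantization with made-up weights, written only for illustration. Tesla has not published how AI5 quantizes its networks, so none of this should be read as its actual scheme.

```python
def quantize_int4(values):
    """Symmetric per-tensor quantization to signed 4-bit integers in [-8, 7]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 7 if max_abs else 1.0  # one float scale shared by the tensor
    quantized = [max(-8, min(7, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the 4-bit integers back to approximate floats."""
    return [q * scale for q in quantized]


weights = [0.70, -0.35, 0.10, 0.02, -0.61]  # made-up example weights
q, scale = quantize_int4(weights)
restored = dequantize(q, scale)
worst_error = max(abs(w - r) for w, r in zip(weights, restored))

print(q)
print(f"worst-case rounding error: {worst_error:.3f}")
```

Each weight now needs 4 bits instead of 16 or 32, at the cost of an error bounded by roughly half the scale. Hardware built around such formats can trade that error for more throughput and less memory traffic.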

Tesla AI5 Chip — Full Specifications

Here’s where things get genuinely exciting. The performance numbers Elon Musk has shared are, frankly, staggering. Let me put them in one place for you:

| Figure | Claim |
|---|---|
| 40× | Faster than AI4 (select scenarios) |
| 5× | Compute vs dual AI4 |
| 9× | More memory |
| 2,500 | TOPS (estimated) |

| Specification | Tesla AI5 (HW5) | Tesla AI4 (HW4) |
|---|---|---|
| Architecture | Single SoC design | Dual SoC design |
| Total TOPS | ~2,000–2,500 TOPS | ~300–500 TOPS |
| Memory | 9× vs HW4 | 16 GB RAM (HW4) |
| Memory Bandwidth | 5× vs HW4 | Baseline |
| Power Draw | Up to 800W (complex) | Up to 160W |
| Memory Packages | 12× SK Hynix (GDDR6/7) | Standard |
| Nvidia Equivalent | H100 (single) / B100 (dual) | — |
| Precision Support | INT4, INT2, FP8, mixed | Standard precision |
| Volume Production | Mid-2027 (targeted) | Active since 2023 |

To give you a sense of scale: Nvidia’s H100 GPU — the gold standard of AI data center compute — costs around $30,000 and needs a chilled server room to operate. Tesla’s AI5, by Musk’s claim, delivers comparable inference performance for Tesla’s specific use case, fits behind your glovebox, runs off your car battery, and costs a fraction of the price. That’s remarkable engineering if it holds up.
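
It helps to run the quoted numbers against each other. The sketch below uses only the figures from the spec table, all of which are estimates or claims rather than measured results, and treats “up to” power figures as peak draw (my assumption).

```python
# Figures as quoted in the spec table; all are estimates or claims.
AI4_TOPS = (300, 500)
AI5_TOPS = (2000, 2500)
AI4_MEMORY_GB = 16
AI4_POWER_W = 160
AI5_POWER_W = 800

# Least and most favourable compute ratios implied by the quoted ranges.
worst_case = AI5_TOPS[0] / AI4_TOPS[1]
best_case = AI5_TOPS[1] / AI4_TOPS[0]

ai5_memory_gb = 9 * AI4_MEMORY_GB  # "9x vs HW4"
power_ratio = AI5_POWER_W / AI4_POWER_W

# Peak TOPS per watt, using the top of each TOPS range and peak power.
ai4_tops_per_watt = AI4_TOPS[1] / AI4_POWER_W
ai5_tops_per_watt = AI5_TOPS[1] / AI5_POWER_W

print(f"Compute ratio: {worst_case:.1f}x to {best_case:.1f}x")
print(f"AI5 memory: {ai5_memory_gb} GB")
print(f"Power ratio: {power_ratio:.0f}x")
print(f"Peak TOPS/W: AI4 {ai4_tops_per_watt:.3f}, AI5 {ai5_tops_per_watt:.3f}")
```

On these figures the raw compute gain lands between 4× and about 8.3×, memory works out to 144 GB, and peak TOPS per watt comes out the same for both chips. In other words, the roughly 5× compute gain tracks the 5× rise in peak power, and the 40× headline only applies to select scenarios.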


Who Manufactures the Tesla AI5 Chip?

This is one of the more fascinating parts of the AI5 story — and something not many articles have gone deep on. Tesla isn’t relying on a single foundry. For AI5, they’ve signed on both TSMC (Taiwan Semiconductor Manufacturing Company) and Samsung Foundry to produce the chip.

Why two manufacturers? It comes down to supply chain resilience and scale. Musk himself said he wants AI5 to become “one of the most produced AI chips ever.” Betting everything on one fab is a risk Tesla isn’t willing to take, especially after the semiconductor shortages of the early 2020s rattled the entire auto industry.

TSMC vs Samsung — Will They Be Identical?

Here’s the nuance: the two versions of AI5 will be physically different. Each foundry uses its own transistor libraries, routing rules, and manufacturing processes. But the goal, Musk has stated clearly, is that the AI software running on both versions is completely identical in behavior.

This might sound familiar — Apple faced a similar situation with its A9 chip back in 2015, dubbed “Chipgate,” where Samsung-built A9 chips showed slightly shorter battery life than TSMC-built ones. Apple put the variance at around 2–3%. Tesla is aware of this history and is apparently engineering around it from the start.

⚡ Building Tesla’s Own Fab: Terafab

Beyond TSMC and Samsung, Tesla and SpaceX announced the $25 billion “Terafab” project in Austin, Texas in March 2026. In April 2026, Intel officially joined Terafab to handle chip fabrication and packaging. This is Tesla’s long-term play for vertical integration: owning its own semiconductor manufacturing at scale, much like Apple’s shift to Apple Silicon has given it unprecedented control over its product line.

Samsung already has a history with Tesla — it fabricates the current AI4 chip and reportedly secured a major multi-year manufacturing deal with the company in 2025. Adding TSMC brings in the world’s most advanced process nodes and manufacturing expertise.


Old vs New: Tesla Chip Evolution — AI3, AI4, and Now AI5

To truly appreciate what AI5 represents, you have to look at where Tesla started. The company’s chip journey is a story of increasingly bold bets on in-house silicon — and it’s worth tracing from the beginning.

Hardware 1 & 2 — The Third-Party Era

Early Tesla vehicles (pre-2019) used chips from Mobileye and Nvidia. The Mobileye EyeQ3 was the brain of Hardware 1, built on a 40nm process with a laughably modest 2.5W power draw. Nvidia’s Drive PX 2 followed with Hardware 2. Tesla was entirely dependent on external silicon — something Elon Musk viewed as an existential limitation.

Hardware 3 (AI3) — Tesla Goes Custom

In 2019, Tesla unveiled its first fully custom FSD chip, designed in-house and fabbed by Samsung on a 14nm process. Led by well-known chip architects Jim Keller and Pete Bannon, the team built HW3 to process images at 2,300 frames per second, a 21× leap over its predecessor. Each chip packed 72 TOPS of compute, and cars came with two for redundancy.
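
A quick sanity check on those HW3 figures, using only the numbers quoted above. The variable names are mine, and the “time per frame” is simply the inverse of the quoted throughput, not a measured latency.

```python
HW3_FPS = 2300        # frames per second quoted for HW3
SPEEDUP = 21          # quoted improvement over its predecessor
TOPS_PER_CHIP = 72
CHIPS_PER_CAR = 2

predecessor_fps = HW3_FPS / SPEEDUP         # implied throughput of the older hardware
avg_ms_per_frame = 1000 / HW3_FPS           # average time per frame at that throughput
board_tops = TOPS_PER_CHIP * CHIPS_PER_CAR  # nominal total if both chips are counted

print(f"Implied predecessor throughput: {predecessor_fps:.0f} fps")
print(f"Average time per frame on HW3: {avg_ms_per_frame:.2f} ms")
print(f"Nominal TOPS per car: {board_tops}")
```

The 144 TOPS total is nominal. If the second chip is there for redundancy, it duplicates work rather than adding usable compute.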

Hardware 4 (AI4) — The Current Standard

Launched in January 2023, AI4 brought dramatically higher camera resolution, 16GB RAM, and 256GB storage — double and quadruple the amounts in HW3. Musk described it as 3–8× more powerful than AI3. Today, every new Tesla — Model 3, Model Y, Model S, Model X, Cybertruck — ships with AI4.

Hardware 5 (AI5) — The Generational Leap

And here we are. AI5 is the most dramatic generational jump in Tesla’s chip history. One architectural shift stands out more than any spec: Tesla is going from dual chips to a single chip.

“Switching from doing 2-chip architectures to 1 means all our silicon talent is focused on making 1 incredible chip. No-brainer in retrospect.”— Elon Musk, on X

In AI3 and AI4, Tesla used two chips per car — one for primary processing, one for redundancy. AI5 moves to a single-chip architecture, which signals something important: Tesla now trusts the reliability of its own silicon enough to not need a backup. That’s a statement of engineering confidence, not just a cost-cutting exercise.

| Generation | Key Specs | Manufacturer | Status |
|---|---|---|---|
| HW1 (Mobileye) | EyeQ3, 2.5W, 40nm | Mobileye | Discontinued |
| HW2 (Nvidia) | Drive PX 2 | Nvidia | Discontinued |
| HW3 / AI3 | 72 TOPS × 2, 14nm | Samsung | Legacy |
| HW4 / AI4 | ~300–500 TOPS, 16GB RAM | Samsung | Current (Active) |
| HW5 / AI5 | ~2,500 TOPS, 9× RAM | TSMC + Samsung | Taped Out (April 2026) |

The Twist Nobody Expected: AI5 Isn’t Going Into Cars First

Okay, here’s the part of this story that genuinely surprised me — and surprised a lot of Tesla watchers too.

When a user on X asked Musk whether AI5 would be heading into cars or robots, his reply was brief and paradigm-shifting:

“Optimus and our supercomputer clusters. AI4 is enough to achieve much better than human safety for FSD.”— Elon Musk, April 15, 2026

Let that sink in. Tesla’s most powerful chip ever — the one Musk called “existential” to the company’s survival — is being directed first at its humanoid robots and data center training clusters, not at the cars.

Why? Because Musk is making a bet: that the current AI4 hardware is already capable of achieving fully unsupervised, Level 4+ self-driving. The cars don’t need AI5 to drive safely. But Optimus — Tesla’s humanoid robot that needs to perform complex physical tasks in the real world — absolutely does. And so do the supercomputers Tesla is building to train ever-larger AI models.

This is actually a shrewd business move. It means the hundreds of thousands of HW4-equipped Teslas already on the road don’t get left behind. It means Tesla doesn’t face the logistical nightmare of mass vehicle retrofits. And it frees up the most powerful silicon for the applications where it’s truly needed.

Tesla AI5 — Full Development Timeline

2024

June 2024: Elon Musk announces AI5 at Tesla’s annual meeting, promising delivery in vehicles by the “second half of 2025.” The hype begins.

2025

July 2025: Musk says AI5 design is “finished,” widely interpreted as nearing tapeout. Turns out, there’s more road ahead.

November 2025: Tesla announces AI5 volume production is pushed to mid-2027. Cybercab is confirmed to launch on AI4 hardware. An AI4.5 stopgap chip quietly appears in new Model Y vehicles.

2026

January 2026: Musk says design is “almost done,” six months after previously saying it was finished. Samsung and TSMC confirmed as dual manufacturers.

March 2026: Tesla and SpaceX announce the $25 billion “Terafab” chip fabrication project in Austin, Texas.

April 15, 2026: 🎉 AI5 officially taped out. Musk confirms AI6, Dojo3, and “other exciting chips” are already in development. First samples expected late 2026.

What Comes After AI5? AI6, Dojo3, and Beyond

Even as the champagne (metaphorically) pops for AI5, Tesla is already looking ahead. In the same post announcing the AI5 tapeout, Musk confirmed that AI6 and Dojo3 are actively in development. Here’s what we know:

Tesla AI6

AI6 is expected to offer roughly twice the performance of AI5. Samsung already secured a deal to produce AI6 chips, and tapeout is targeted for December 2026. Volume production could begin as early as mid-2028. Interestingly, Musk has indicated AI6 is also primarily targeted at Optimus and data centers — not vehicles — suggesting AI4 really may be the last automotive silicon upgrade for a while.

Dojo 3

Perhaps the most ambitious part of Musk’s announcement was the confirmation that Dojo 3 is back on the table. Dojo is Tesla’s wafer-level supercomputer chip designed for AI training — not inference. Reports last year suggested the Dojo program had been abandoned after key team members departed. Apparently not. Dojo 3 is being optimized for what some sources describe as orbital data centers — compute clusters potentially deployed in space to bypass Earth-based power grid constraints. Wild? Yes. Tesla? Also yes.

🔮 Nine-Month Chip Cadence

Musk has floated the idea of a nine-month development cycle for subsequent AI chip generations, a cadence that would make Tesla’s chip roadmap faster than Nvidia’s or AMD’s annual release cycles. Whether that’s achievable for major architectural overhauls remains to be seen, but the ambition is there.
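
Here is how the dates in this article line up against that nine-month idea. This is a rough sketch at month granularity; reading “mid-2027” and “mid-2028” as June is my assumption, and the AI6 dates are targets, not results.

```python
def months_between(start, end):
    """Whole calendar months between two (year, month) pairs."""
    return (end[0] - start[0]) * 12 + (end[1] - start[1])


AI5_TAPEOUT = (2026, 4)          # April 2026, per Musk's post
AI6_TAPEOUT_TARGET = (2026, 12)  # December 2026 target
AI5_VOLUME_TARGET = (2027, 6)    # "mid-2027", assumed to mean June
AI6_VOLUME_TARGET = (2028, 6)    # "mid-2028", assumed to mean June

tapeout_gap = months_between(AI5_TAPEOUT, AI6_TAPEOUT_TARGET)
volume_gap = months_between(AI5_VOLUME_TARGET, AI6_VOLUME_TARGET)

print(f"Tapeout to tapeout: {tapeout_gap} months")
print(f"Volume to volume: {volume_gap} months")
```

Measured tapeout to tapeout, the AI6 target is about eight months out, which fits the nine-month talk. Measured by volume production, the gap is closer to a year.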

Fast Thinking, But What About Accuracy and Safety?

Raw compute power is impressive. But in the context of self-driving cars, speed without accuracy is actually dangerous. This is the question that researchers and safety experts continue to raise about Tesla’s approach.

Tesla’s strategy is to build an end-to-end AI model — trained on billions of real-world driving miles collected from its customer fleet — that can handle virtually any driving scenario. More compute means larger models, longer context windows, and the ability to process more history from each drive in real time.

But there are important caveats. Regulatory approval for unsupervised autonomy — Level 4 or Level 5 — isn’t just a hardware problem. Agencies like the NHTSA require exhaustive validation, liability frameworks, and demonstrated public safety over millions of edge cases: construction zones, emergency vehicles, adverse weather, unusual road markings.

Even if AI4 (and certainly AI5) has the raw computational horsepower to process driving scenarios better than any human, the gap between “technically capable” and “regulatorily approved” remains wide. Tesla’s FSD v14, running on AI4 hardware, is still supervised — meaning drivers must remain attentive and ready to intervene.

Accuracy — not just speed — is the real benchmark. A chip that processes bad decisions faster is not progress. The AI5’s 9× memory advantage is particularly relevant here: larger memory means Tesla can run models with much longer context windows, giving the car a more complete understanding of how a scene is evolving over time. That’s where meaningful safety gains come from.
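
To make the memory point concrete, this sketch estimates an upper bound on how many model weights could fit in 16 GB versus 144 GB (9× 16 GB) at different precisions. It assumes decimal gigabytes and that every byte holds weights, which never happens in practice because activations, buffered history, and system software all need room. Treat the output as a ceiling, not a model size Tesla has announced.

```python
GB = 10**9  # decimal gigabytes; an assumption about how the figures are quoted


def max_parameters(memory_gb: float, bits_per_weight: int) -> float:
    """Upper bound on weights that fit if ALL memory held weights."""
    return memory_gb * GB * 8 / bits_per_weight


for chip, memory_gb in [("AI4", 16), ("AI5", 144)]:
    for fmt, bits in [("FP8", 8), ("INT4", 4), ("INT2", 2)]:
        billions = max_parameters(memory_gb, bits) / 1e9
        print(f"{chip} {fmt}: up to {billions:.0f}B weights")
```

The ratio between the two chips stays at 9× for any precision, so the extra headroom can go toward a bigger model, more scene history, or a mix of both.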

Should Tesla Buyers Wait for AI5?

If you’re considering buying a Tesla today and wondering whether to hold out for AI5 hardware — here’s my honest take.

Consumer vehicles are not expected to receive AI5 until 2027 at the earliest. The Cybercab robotaxi, launching in Q2 2026, will run on AI4. Your current Model 3 or Model Y with HW4 is not obsolete — it’s the hardware Tesla is actively developing and validating FSD on, for millions of vehicles globally.

Will AI5 eventually come to vehicles? Almost certainly. Will it be a meaningful upgrade when it does? Absolutely — 5× more compute, 9× more memory, and support for much larger neural networks will matter. But that timeline is 2027+, and Tesla’s history of chip transitions (HW3 to HW4 took months before HW4 even had FSD access) suggests you won’t miss out dramatically by buying today.

The more interesting consideration is the pattern Electrek and others have noted: every new chip generation makes the previous promise feel a little further away. HW3 owners were promised full self-driving. HW4 took over. Now AI5 is here, and some argue the goalposts have moved again. That’s a legitimate concern worth keeping in mind as a buyer.

Final Thoughts — Tesla’s AI5 Is a Landmark Moment

I’ve been covering the automotive and technology industry for a while now, and the Tesla AI5 tapeout genuinely feels like a landmark. Not because it solves self-driving tomorrow, or because it’ll be in your car next year. But because it marks the moment Tesla stopped being just a car company and became, credibly, one of the most serious AI hardware players on the planet.

A chip that rivals Nvidia’s $30,000 H100 for a fraction of the cost, manufactured at scale by both TSMC and Samsung, with a roadmap that includes orbital data centers and humanoid robots — that’s not a car company’s side project. That’s a technology company’s core bet on the future.

Whether you’re a Tesla owner, an EV enthusiast, or just someone watching the AI hardware race unfold, the AI5 is worth paying attention to. The next chapter — AI6, Dojo3, Terafab — is already being written. And if Tesla pulls this off at the scale Musk is describing, the implications go far beyond what car you drive to work.

Stay tuned to AutoAkhbar.com — we’ll be covering every development as it happens.

What is the Tesla AI5 chip?

“Tesla A15 chip” is a common misspelling of Tesla’s AI5 chip (the “A15” name is a typo popularized by some tech blogs). AI5 is Tesla’s fifth-generation custom AI processor, designed for Full Self-Driving, Optimus robots, and AI data center use.

What are the Tesla AI5 chip specifications?

Tesla AI5 is estimated to deliver roughly 2,000–2,500 TOPS of AI compute with 144 GB of memory (9× the 16 GB in HW4), and Musk claims up to a 40× improvement over the current HW4 in select scenarios. It features a multi-die design with 12 SK Hynix DRAM modules.

Who manufactures the Tesla AI5 chip?

The Tesla AI5 chip is manufactured by both TSMC and Samsung. Tesla is also building its own TeraFab facility in Austin, Texas, for future in-house production.

When will Tesla AI5 chips be in cars?

High-volume production is targeted for mid-to-late 2027. Tesla has stated that the current AI4 / HW4 chip is sufficient for FSD safety goals, so AI5 will first be used in Optimus robots and data centers.

How does Tesla AI5 compare to Nvidia chips?

Tesla claims that a single AI5 chip rivals Nvidia’s Hopper (H100) in inference performance for its specific workloads, and a dual-chip AI5 configuration competes with Nvidia’s Blackwell — but at significantly lower cost and power consumption.

Jyoti Sharma

Co-Founder & Automotive Content Strategist | AutoAkhbar

Jyoti covers the intersection of automotive technology and artificial intelligence at AutoAkhbar. She has been following Tesla’s hardware roadmap since the HW3 era and believes the best cars are the ones that keep getting smarter over time. When she’s not writing about chips, she’s probably arguing about whether range anxiety is still a real thing.

📍 India | 🚗 EV Trends • Automotive News • SEO Strategy